While we know you may be tempted to look, no peeking! Test results viewed too early can be misleading. It’s like trying to predict the NBA season based only on a team’s first game: we might predict that a team will dominate the playoffs when, in reality, it doesn’t even make them. To achieve high test confidence, patience is key.

Test confidence in a nutshell

Confidence refers to the level of certainty associated with a result or estimate obtained from data analysis. It is typically expressed as a percentage or range, representing the degree of belief or assurance in the accuracy of the result.

No matter how many weeks the test has run, if testers are looking at results with a confidence level below 50%, they’d be better off flipping a coin to guess the results of the test. If the confidence level is between 50 and 80%, the results are a good indication of direction (whether the initiative is driving a positive or negative lift) and not necessarily a good prediction of the exact lift number. Confidence above 80% is ideal.

Confidence is often low in the early weeks of a test because the true result has not yet been separated from the noise in the data. Even if confidence is high within the first week or two, results should not be reported until the test has had more time to filter through the noise.

What drives high test confidence?

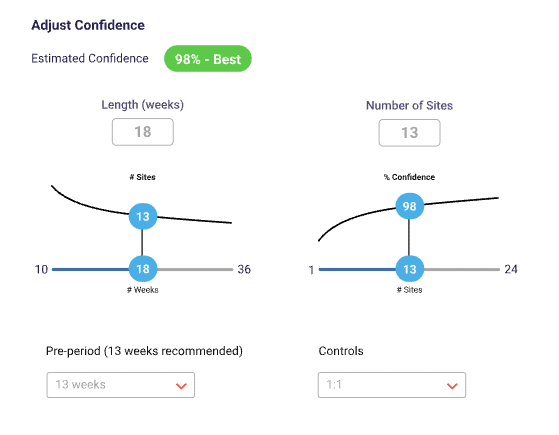

Confidence is often driven by sample size, test length, and lift size. If you would like test results with high confidence, increasing the sample size or the test length is usually a good option.
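To see how these three drivers interact, here is a minimal sketch in Python. It assumes a simplified definition of confidence (the probability that the true lift is positive, under a normal approximation), which is for illustration only and is not necessarily how MarketDial calculates confidence. The function name `confidence`, the `weekly_noise_sd_pct` parameter, and its default of 20% are all hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def confidence(lift_pct, n_sites, n_weeks, weekly_noise_sd_pct=20.0):
    """Illustrative confidence: P(true lift > 0) under a normal approximation.

    Assumes n_sites in each of the test and control groups, with
    independent weekly observations per site (a simplification).
    """
    # Standard error of the difference between test and control averages.
    standard_error = weekly_noise_sd_pct * sqrt(2.0 / (n_sites * n_weeks))
    return NormalDist().cdf(lift_pct / standard_error)


# Same sample size and test length, three different lift sizes.
for lift in (0.5, 2.0, 10.0):
    print(f"lift {lift}%: confidence {confidence(lift, n_sites=50, n_weeks=8):.1%}")

# Same small lift, but four times as many sites.
print(f"0.5% lift, 200 sites: {confidence(0.5, n_sites=200, n_weeks=8):.1%}")
```

In this toy model the noise shrinks only with the square root of the number of sites or weeks, so a small lift needs far more data than a large one to reach the same confidence.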

Sometimes, testers can do everything right in setting up the tests and still have low confidence. At MarketDial, we have seen tests that have run for multiple years with over 100 sites and still have confidence below 60%. This can be frustrating to some, but it just means that the initiative was not driving significant results.

One interesting driver of confidence is the lift amount. Let’s say a test is showing a 0.5% lift. In most cases, regardless of the sample size and test length, this test will have low confidence. That’s because a lift this small is really difficult to detect.

For example, let’s pretend that the true lift amount that an initiative is driving is a fish in a lake. If that fish is bigger, like a humpback whale, it will be easier to find and we’ll have very high confidence that we’ll find it since it is such a large target. If the fish, however, is a little tadpole, it will be incredibly hard to find, and we would have very low confidence that we would find it at all in a large lake.

Now let’s say the test is one week in and the results so far are showing a 10% lift. Naturally, there will be very high confidence since this initiative is already showing large results. That 10% is like a humpback whale: it’s very obvious. That does not mean, however, that the final lift amount at the end of the test will be 10%. It just means that so far, we are finding significant results that are easy to spot.

Even with high confidence in the first week, the lift can change by up to 5% by the end of the test. Not only that, but the confidence doesn’t always stay above 90%. About 16% of tests that had confidence above 90% in the first week dropped to below 75% confidence by the end of the test.
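The effect of peeking can be explored with a small simulation. The sketch below uses made-up numbers (a true lift of 1%, weekly noise of 3 percentage points, 12-week tests) and the same simplified, hypothetical definition of confidence as a normal-approximation probability that the lift is positive. It is not meant to reproduce the figures quoted above, only to show the mechanism by which early confidence can fade.

```python
import random
from math import sqrt
from statistics import NormalDist, mean


def simulate_peeking(n_tests=2000, n_weeks=12, true_lift=1.0,
                     weekly_se=3.0, seed=0):
    """Count simulated tests that look confident in week 1 but not at the end.

    Returns (high_early, dropped): the number of tests with week-1
    confidence above 90%, and how many of those finish below 75%.
    All parameter values are hypothetical.
    """
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    phi = NormalDist().cdf
    high_early = dropped = 0
    for _ in range(n_tests):
        # Each week's measured lift is the true lift plus independent noise.
        weekly = [rng.gauss(true_lift, weekly_se) for _ in range(n_weeks)]
        conf_week1 = phi(weekly[0] / weekly_se)
        # Averaging over all weeks shrinks the noise by sqrt(n_weeks).
        conf_final = phi(mean(weekly) / (weekly_se / sqrt(n_weeks)))
        if conf_week1 > 0.90:
            high_early += 1
            if conf_final < 0.75:
                dropped += 1
    return high_early, dropped


high_early, dropped = simulate_peeking()
print(f"{dropped} of {high_early} early high-confidence tests "
      f"ended below 75% confidence")
```

A test that looks strong in week one is often one where noise happened to push the reading upward, so later weeks tend to pull the estimate back toward the true lift.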

How long should I wait to report results?

In general, it’s best to wait the full length of the test. If that’s not possible, however, it’s typically best to wait at least 4 to 5 weeks.

How do I respond to stakeholders who want to see the results early?

It’s difficult to manage expectations when stakeholders are expecting results after just a week or two. Here are some helpful tips:

- Set expectations early. Before the test starts, let them know that you will share results with them after the test has run for multiple weeks.

  Example: “Thanks for sending me this information to get that test built for you! I will send you the results when the test has run for 3 weeks. That will be on April 3. Looking forward to talking then!”

- Give them a taste of the results but don’t share everything.

  Example: “I’m glad you’re so eager to see the results! So far, they are showing a positive lift but as the test goes on, this could always change. I’m planning on sharing more concrete results with you in 3 weeks.”

- Educate them on the benefits of waiting to report results.

  Example: “I am excited to see the results of this test, too! Data shows that in the first four weeks of a test, there will still be quite a bit of noise and results will begin to stabilize after those initial four weeks. The test will have run for four weeks on April 10 so let’s reconnect then.”

To learn more about how to be confident in your test results, check out these resources:

- How to create a statistically valid test
- The rhythm and rhyme of retail test implementation
- Minimize bias and maximize your test results